300 - Convolution network, gradient, tweaks, with pytorch


Image classification on CIFAR10.

Note: if tqdm is not installed, install it with: !pip install tqdm

import time
import numpy as np
import pandas as pd
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torch.autograd import Variable
print("torch", torch.__version__)
from torchvision import datasets, transforms
from tqdm import tqdm

torch 1.5.0+cpu

%matplotlib inline

BATCH_SIZE = 64
TEST_BATCH_SIZE = 64
DATA_DIR = 'data/'
USE_CUDA = False  # switch to True if you have a GPU
N_EPOCHS = 2  # 5

train_loader = torch.utils.data.DataLoader(
    datasets.CIFAR10(DATA_DIR, train=True, download=True,
                     transform=transforms.Compose([
                         transforms.ToTensor(),
                         # (0.1307, 0.3081) are the usual MNIST statistics; the single
                         # value is broadcast over the three CIFAR10 channels
                         transforms.Normalize((0.1307,), (0.3081,))
                     ])),
    batch_size=BATCH_SIZE, shuffle=True)

test_loader = torch.utils.data.DataLoader(
    datasets.CIFAR10(DATA_DIR, train=False, transform=transforms.Compose([
        transforms.ToTensor(),
        # same normalization as for the training set
        transforms.Normalize((0.1307,), (0.3081,))
    ])),
    batch_size=TEST_BATCH_SIZE, shuffle=True)

Downloading https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz to data/cifar-10-python.tar.gz


Extracting data/cifar-10-python.tar.gz to data/

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
        self.conv2_drop = nn.Dropout2d()
        self.fc1 = nn.Linear(500, 50)  # 500 = 20 channels * 5 * 5
        # CIFAR10 has 10 classes, so only the first 10 of the 64 outputs can be targets
        self.fc2 = nn.Linear(50, 64)

    def forward(self, x):
        x = F.relu(F.max_pool2d(self.conv1(x), 2))
        x = F.relu(F.max_pool2d(self.conv2_drop(self.conv2(x)), 2))
        x = x.view(-1, 500)  # flatten to (batch, 500)
        x = F.relu(self.fc1(x))
        x = F.dropout(x, training=self.training)
        x = self.fc2(x)
        return F.log_softmax(x, dim=-1)
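The value `500` used by `fc1` and `x.view` follows from the layer sizes. A minimal sketch of that arithmetic, assuming 32x32 CIFAR10 inputs, unpadded stride-1 convolutions and non-overlapping 2x2 pooling (the helpers `conv_out` and `pool_out` are illustrative names, not PyTorch functions):

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial size after a convolution with dilation 1."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel):
    """Spatial size after non-overlapping max pooling (stride == kernel)."""
    return size // kernel


size = 32                               # CIFAR10 images are 32x32
size = pool_out(conv_out(size, 5), 2)   # conv1 + pool: 32 -> 28 -> 14
size = pool_out(conv_out(size, 5), 2)   # conv2 + pool: 14 -> 10 -> 5
n_features = 20 * size * size           # conv2 produces 20 channels
print(size, n_features)                 # 5 500
```

Changing the kernel size or the number of channels would require updating both `nn.Linear(500, 50)` and `x.view(-1, 500)` accordingly.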

model = Net()
if USE_CUDA: model = model.cuda()
optimizer = optim.Adam(model.parameters())

def train(epoch, verbose=True):
    model.train()
    losses = []
    loader = tqdm(train_loader, total=len(train_loader))
    for batch_idx, (data, target) in enumerate(loader):
        if USE_CUDA:
            data, target = data.cuda(), target.cuda()
        data, target = Variable(data), Variable(target)
        optimizer.zero_grad()
        output = model(data)
        loss = F.nll_loss(output, target)
        loss.backward()
        optimizer.step()
        losses.append(float(loss.item()))
        if verbose and batch_idx % 100 == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))
    return np.mean(losses)
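`train` applies `F.nll_loss` to the output of `F.log_softmax`, which together amount to the cross-entropy loss. A minimal pure-Python sketch of that computation for a single sample, with made-up logits and illustrative helper names (these are not the PyTorch implementations):

```python
import math


def log_softmax(logits):
    """Log-probabilities, computed stably by subtracting the max logit."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_sum for v in logits]


def nll_loss(log_probs, target):
    """Negative log-likelihood of the target class for one sample."""
    return -log_probs[target]


logits = [2.0, 0.5, -1.0]   # made-up scores for 3 classes
target = 0
log_probs = log_softmax(logits)
loss = nll_loss(log_probs, target)

# Same quantity written directly as the cross-entropy of the softmax.
direct = -math.log(math.exp(logits[target]) / sum(math.exp(v) for v in logits))
print(round(loss, 6), round(direct, 6))
```

Over a batch, `F.nll_loss` averages these per-sample values by default, and sums them when called with `reduction='sum'`.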

def test(verbose=True):
    model.eval()
    test_loss = 0
    correct = 0
    for data, target in test_loader:
        if USE_CUDA: data, target = data.cuda(), target.cuda()
        with torch.no_grad():  # no gradient is needed for evaluation
            data = Variable(data)
            target = Variable(target)
            output = model(data)
        test_loss += F.nll_loss(output, target, reduction='sum').item()  # sum up batch loss
        pred = output.data.max(1, keepdim=True)[1]  # get the index of the max log-probability
        correct += pred.eq(target.data.view_as(pred)).cpu().sum().item()
    test_loss /= len(test_loader.dataset)
    if verbose:
        print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
            test_loss, correct, len(test_loader.dataset),
            100. * correct / len(test_loader.dataset)))
    return [float(test_loss), correct]
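In `test`, the prediction for each sample is the index of the largest output, and `correct` counts how many predictions match the targets. A small pure-Python sketch of that counting step, using made-up scores and a hypothetical helper `count_correct`:

```python
def count_correct(scores_batch, targets):
    """Count samples whose highest-scoring class equals the target."""
    correct = 0
    for scores, target in zip(scores_batch, targets):
        pred = max(range(len(scores)), key=scores.__getitem__)  # argmax
        correct += int(pred == target)
    return correct


# Made-up scores for 3 samples and 3 classes.
batch = [[-0.2, -2.0, -3.0], [-1.5, -0.4, -2.2], [-0.9, -1.1, -1.3]]
targets = [0, 1, 2]
n_correct = count_correct(batch, targets)
print(n_correct)  # 2
```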

perfs = []
for epoch in range(1, N_EPOCHS + 1):
    t0 = time.time()
    train_loss = train(epoch, verbose=False)
    test_loss, correct = test(verbose=False)
    perfs.append([epoch, train_loss, test_loss, correct, len(test_loader.dataset), time.time() - t0])
    print("epoch {}: train loss {:.4f}, test loss {:.4f}, accuracy {}/{} in {:.2f}s".format(*perfs[-1]))

100%|██████████| 782/782 [00:45<00:00, 17.31it/s]

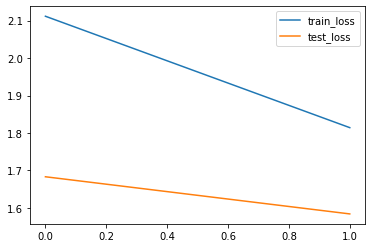

epoch 1: train loss 2.1118, test loss 1.6829, accuracy 3942/10000 in 49.14s

100%|██████████| 782/782 [00:41<00:00, 18.71it/s]

epoch 2: train loss 1.8141, test loss 1.5836, accuracy 4203/10000 in 45.54s

df_perfs = pd.DataFrame(perfs, columns=["epoch", "train_loss", "test_loss", "accuracy", "n_test", "time"])

df_perfs

| | epoch | train_loss | test_loss | accuracy | n_test | time |
|---|---|---|---|---|---|---|
| 0 | 1 | 2.111783 | 1.682875 | 3942 | 10000 | 49.143509 |
| 1 | 2 | 1.814102 | 1.583610 | 4203 | 10000 | 45.540153 |
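The `accuracy` column holds counts of correct predictions rather than rates. A short sketch converting the counts reported in the table into rates, in plain Python with the values copied by hand:

```python
# (epoch, correct predictions, test set size), copied from the table
results = [(1, 3942, 10000), (2, 4203, 10000)]

rates = [correct / n_test for _, correct, n_test in results]
for (epoch, _, _), rate in zip(results, rates):
    print("epoch {}: accuracy {:.2%}".format(epoch, rate))
```

For reference, random guessing over the 10 CIFAR10 classes would give about 10%.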

df_perfs[["train_loss", "test_loss"]].plot();